Pete Hegseth C*ckblocks Anthropic

And OpenAI collects its winnings.

On Friday, Defense Secretary Pete Hegseth[1] lashed out at the AI company Anthropic for daring to enforce its terms of service.

“Anthropic delivered a master class in arrogance and betrayal,” he snorted in a 300-word tweet, accusing the company of attempting to “seize veto power over the operational decisions of the United States military.”

Thoughts and prayers for the poor aide tasked with wiping down the spittle-flecked mirror after Hegseth finished drafting this clunker.

“I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security,” he fulminated, effectively slapping an “NSFW” on the company, at the very moment he was relying on its AI Claude to navigate the invasion of Iran.

“Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service,” he conceded, even as he bellowed that “America’s warfighters will never be held hostage by the ideological whims of Big Tech.”

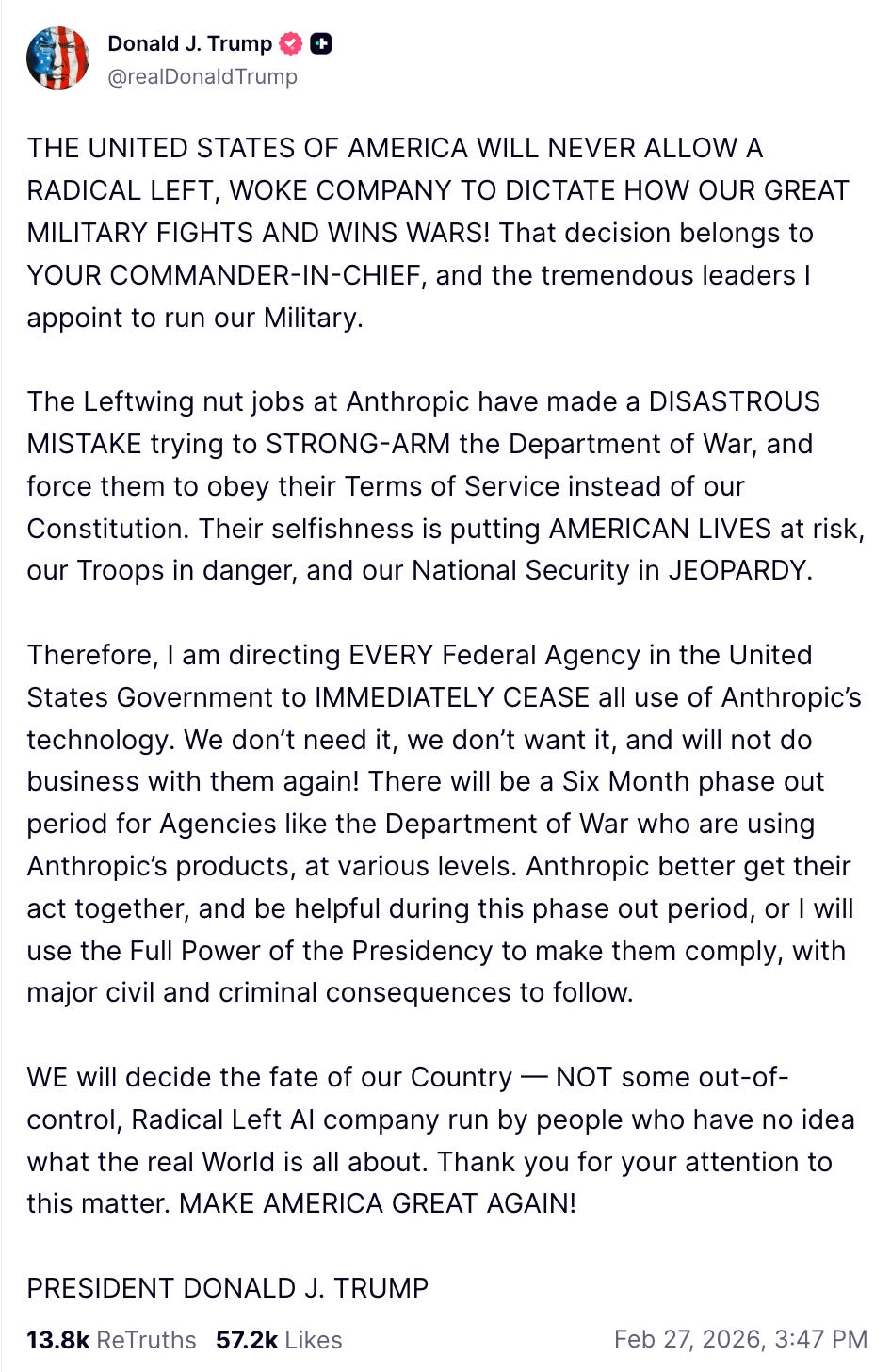

Over on Truth Social, the president screeched that the government didn’t need help from a “RADICAL LEFT, WOKE COMPANY,” while simultaneously threatening “major civil and criminal consequences” if the company doesn’t cooperate with the military for another six months.

Notably, neither Trump nor Hegseth wanted to talk about what exactly Anthropic refused to do for the military.

Chat, is it good when …?

Let’s not bury the lede here: The DoD wants to use AI for fully autonomous lethal systems and mass surveillance of American citizens. Anthropic said “no,” and now the US government is retaliating by trying to destroy it.

As for the first, it’s axiomatic that we should not allow machines to decide whether to kill people. The 1979 IBM Training Manual famously warned that “a computer can never be held accountable, therefore a computer must never make a management decision.”

As for the second, with AI’s gargantuan computing power, it would take just seconds to spin up a granular profile of any American from the private data we turn over to the government and the digital data cloud we emit with every click.

Anthropic was founded by siblings Dario and Daniela Amodei, who left OpenAI after concluding that the world’s dominant AI company was prioritizing commercial growth over safety. Dario Amodei expressed discomfort after the Wall Street Journal reported that the DoD had used Claude “through Anthropic’s partnership with data company Palantir Technologies” in the raid to capture Venezuelan President Nicolás Maduro.

Hegseth’s sneering about “the sanctimonious rhetoric of effective altruism” is of a piece with his attitude toward “stupid rules of engagement.” He is, after all, the man who caught Trump’s attention through his zealous advocacy on behalf of war criminals.

Anthropic’s position was never that the military couldn’t use AI. In fact, this dispute arose over the terms of a $200 million deal with the Pentagon to integrate Claude into its operations. Hegseth lost his shit when Anthropic, the only AI company currently allowed into the federal government’s classified systems, started making noise about enforcing its own terms of service. In a statement released last Thursday, Dario Amodei wrote that “in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values,” citing mass domestic surveillance and fully autonomous weapons. Amodei mentioned Hegseth and company’s threats, but said they “do not change our position: we cannot in good conscience accede to their request.”

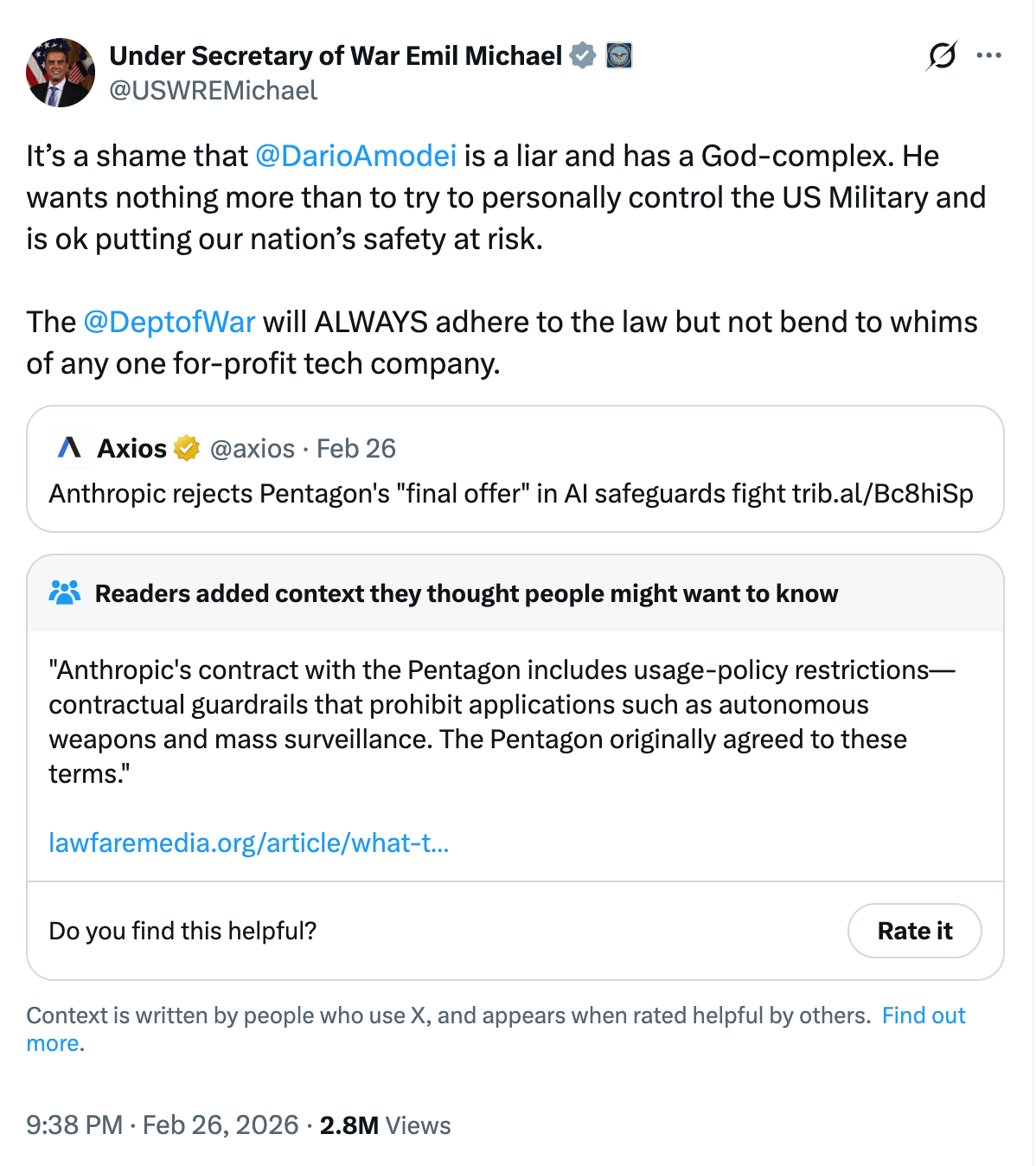

The meltdowns from the regime came the next day, with Hegseth insisting that anything other than “full, unrestricted access” to Anthropic’s models is an attempt to “strong-arm the United States military into submission.” Defense Undersecretary Emil Michael, a Silicon Valley veteran negotiating on behalf of the Pentagon, tweeted that Amodei was a “liar” with a “God complex.”

To punish Anthropic for daring to insist on guardrails, Hegseth slapped a supply chain risk designation on the company, lumping it in with Huawei and Kaspersky, whose products are heavily restricted as possible vectors for spying by hostile foreign powers. That’s a potential death sentence, particularly since Hegseth insists that “no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.”

On social media, Trump ordered “EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology.”

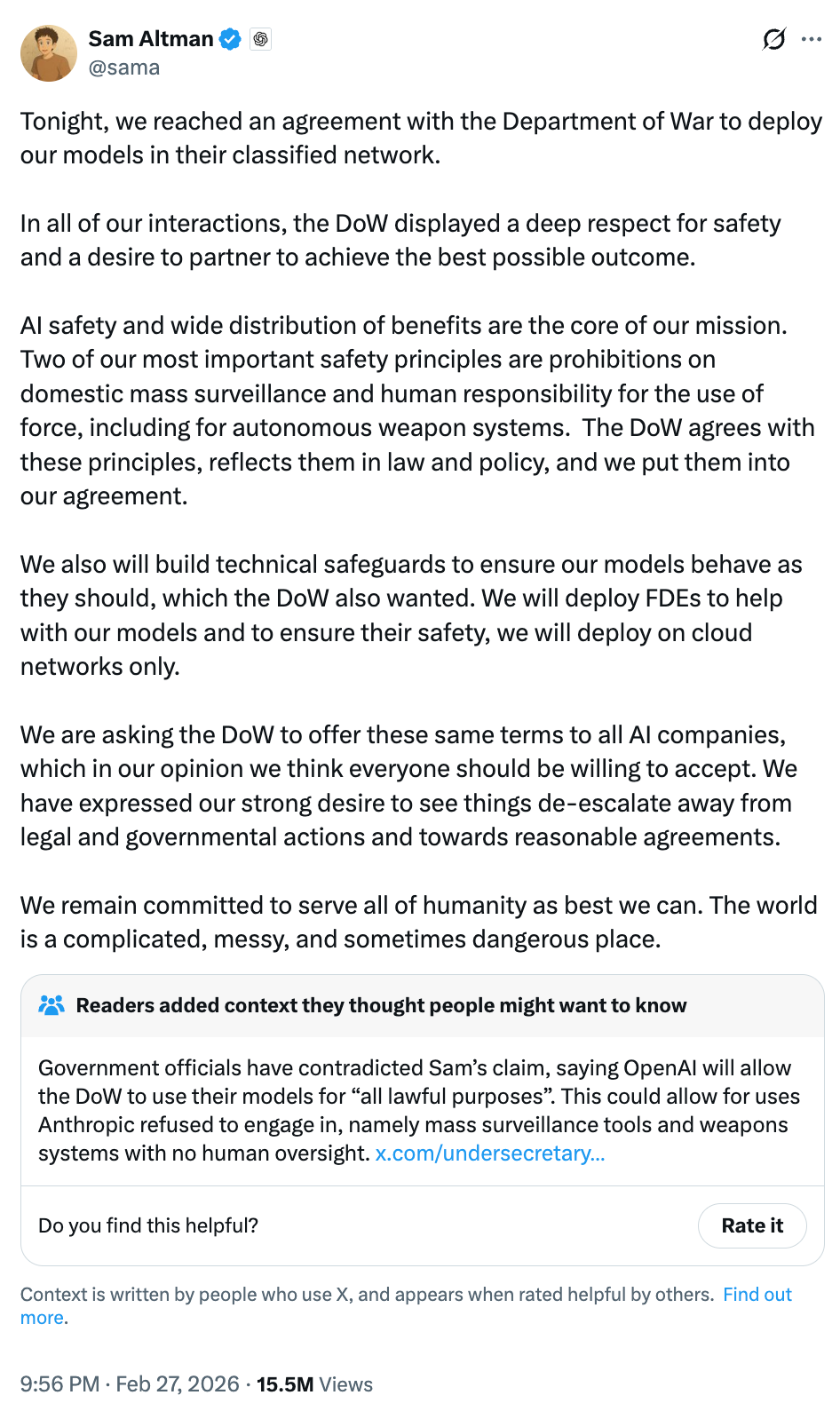

Sensing an opportunity, OpenAI CEO Sam Altman lurched forward to prove once again that he is exactly who his critics say he is.

“Yesterday we reached an agreement with the Pentagon for deploying advanced AI systems in classified environments, which we requested they also make available to all AI companies,” OpenAI crowed. “We think our agreement has more guardrails than any previous agreement for classified AI deployments, including Anthropic’s.”

But, as The Verge explains in detail, that’s not really true. An excerpt of the contract posted by OpenAI makes it clear that the only restraint on the DoD is “the law.”

The Department of War may use the AI System for all lawful purposes, consistent with applicable law, operational requirements, and well-established safety and oversight protocols. The AI System will not be used to independently direct autonomous weapons in any case where law, regulation, or Department policy requires human control, nor will it be used to assume other high-stakes decisions that require approval by a human decisionmaker under the same authorities. Per DoD Directive 3000.09 (dtd 25 January 2023), any use of AI in autonomous and semi-autonomous systems must undergo rigorous verification, validation, and testing to ensure they perform as intended in realistic environments before deployment.

For intelligence activities, any handling of private information will comply with the Fourth Amendment, the National Security Act of 1947 and the Foreign Intelligence and Surveillance Act of 1978, Executive Order 12333, and applicable DoD directives requiring a defined foreign intelligence purpose. The AI System shall not be used for unconstrained monitoring of U.S. persons’ private information as consistent with these authorities. The system shall also not be used for domestic law-enforcement activities except as permitted by the Posse Comitatus Act and other applicable law.

This is a blank check predicated on a promise to follow the law endorsed by a Secretary of Defense who just illegally kneecapped OpenAI’s competitor. And not for nothing, but if your position is that it’s fine because the Trump administration says “Trust us!” you should probably close the chat window and think about your life choices.

Lawful or “lawful?”

“Whiskey” Pete has never been persnickety about statutory compliance, so it’s perhaps unsurprising that his tweets left observers confused about Anthropic’s legal status.

Hegseth appears to be relying on his authority under 10 U.S.C. § 3252 to whack Anthropic. But, as Professor Alan Rozenshtein points out at Lawfare, § 3252 requires consultation “with procurement or other relevant officials,” informing Congress, and a “determination in writing” that the designation is “necessary to protect national security by reducing supply chain risk” and that “less intrusive measures are not reasonably available.”

Let’s take a wild shot in the dark that the paperwork there is a little shoddy (if it exists at all). And while the statute protects classified risk designations from court challenges, Hegseth’s 300-word rant blabbing that he’s retaliating against Anthropic for refusing to give him what he wants probably abrogates that immunity.

Anthropic says it intends to sue and will likely challenge the supply-chain risk designation as an ultra vires act, one taken outside the statutory authority Congress actually granted. The company is also making its case to the public, which showed its approval by downloading the Anthropic app in record numbers last weekend. And in the Senate, Trump’s Republican allies are signaling that he needs to yank the reins on his hotheaded SecDef.

“They’re telling Anthropic that they should compromise their code of conduct to facilitate whatever it is Hegseth or somebody wants,” retiring Senator Thom Tillis told Politico. Even Republican stalwart Senator Mike Rounds is demanding a briefing on the debacle.

Everything is culture war

Hegseth frames this as a showdown between patriots and “unelected tech executives.” In reality, it’s a gross abuse of power by an intemperate cable news host using national security law to destroy a company that dared to defy him. It’s also a signal to every government contractor that negotiating too hard may lead to being exiled from the federal marketplace.

Instead of developing a rational AI policy for the military, we get endless chest-thumping and social media dominance plays. The Trump administration folds the AI wars into the culture wars, which are being folded into a real war — one the government insists isn’t a war at all.

But casting OpenAI as the MAGA-coded model and Anthropic as the lib version is not a substitute for policymaking, and it fails to reckon with the very real issues this technology presents. Let’s not forget that Anthropic was perfectly willing to take government money to participate in this Iranian misadventure — it just didn’t want to be on the hook when the trigger got pulled. OpenAI took the deal, calculating that it could lock its rival out of the government market. There are no “good guys” here.

A pox on all their houses. Not in equal measures, but a pox nonetheless.

[1] It’s not the “Department of War,” and you can’t make us pretend it is.

That’s it for today

We’ll be back with more tomorrow. If you appreciate today’s PN, please do your part to keep us free by signing up for a paid subscription.

Thanks for reading, and for your support.

Anthropic CEO Dario Amodei refused Pentagon demands to remove restrictions preventing Claude’s use for mass domestic surveillance and fully autonomous weapons. OpenAI signed the deal Anthropic rejected, proving exactly the framework that’s playing out: American AI companies increasingly function as state surveillance apparatus, with those refusing co-option facing existential retaliation.

Here’s my hope, and what I’m watching: Europe poaches Anthropic entirely. The values Amodei defended—no mass surveillance, no autonomous weapons, human oversight—align precisely with the EU AI Act’s framework, not America’s authoritarian trajectory.

If Anthropic relocates to Europe, bringing its researchers, capital, and institutional knowledge, it accelerates the brain drain I documented and strengthens the EU’s “trustworthy AI” alternative exactly when democracies need proof that rights-respecting technology can compete. This isn’t just one company’s dispute with Pentagon procurement…it’s a test of whether democratic AI governance remains viable, or whether surveillance capitalism and techno-fascism win because authoritarian models operate without moral constraints.

I published The World Ahead 2026: Part 4, mapping three incompatible AI governance frameworks: the US model (surveillance weaponized for control), China’s model (purpose-built for authoritarianism), and the EU’s experiment in trustworthy AI.

The answer determines whether democracy survives the AI transition. One big tech company is pondering whether to fight back…

Listen: Hegseth is now telling the troops that he is leading the world into Armageddon! As in, the End Of The World. THIS should be the single most urgent, frightening thing on the table today! If Hegseth, the head of our armed forces, has his way, there ain't gonna BE any "A.I.", or any more US, as in, living things!